Wiley, a leading academic publisher of STEM journals, recently announced a five-year agreement with the DEAL Consortium in Germany. The consortium represents more than 1000 academic institutions in Germany.

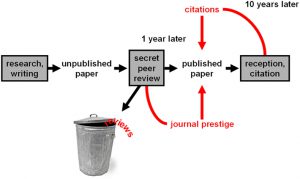

Scholarly communication has been shifting toward open access (OA) publishing and the evolution of the hybrid publishing model. This partnership is intended to better address the needs of the scholarly community.

Thanks to this five-year deal, Wiley will now offer open access publishing free of charge to authors at leading institutions in Germany. As a result, readership of and access to content from Wiley’s portfolio of journals should increase. Let us look at how this agreement benefits the research ecosystem of Germany.

The deal will facilitate a better understanding of all the scholarly institutions associated with OA publishing in Germany. It will also recognize the investment that journals need in order to deliver high-quality, high-impact content to researchers.

The deal also enables the development of better workflow infrastructure. Both parties have agreed to support the readers, authors, and librarians involved in the OA movement. Finally, all the institutions in the consortium will benefit from training sessions and workshops held by Wiley’s well-established team.

The deal between the DEAL Consortium and Wiley is set to support a new wave of the open access movement in Germany. This could be immensely beneficial in helping the German research community achieve path-breaking results.

Kudos to the five-year agreement, which focuses on supporting both the publishers and the researchers associated with the OA movement. The DEAL Consortium has much appreciated Wiley’s collaborative approach. The cooperation model is sustainable.